Searching out Trends in the Supercomputer Environment and Technology Development Following the “K computer”

Interview with “K computer” Developer regarding Efforts in Exascale and Coming Supercomputer Strategies

Executive Architect, Technical Computing Solutions Unit, Fujitsu Limited

Motoi Okuda

●Will the “K computer” class supercomputer be available onsite around the year 2015!?

━ The era of the “K computer” has begun, but its use is restricted to proposal-based projects and it is not yet available to everyone. This is inevitable because the “K computer” is the only supercomputer in Japan with a calculation performance of 10 PFLOPS. However, I believe many researchers dream of having an advanced supercomputer environment available on demand. “FX10”, the commercial model of the “K computer”, has been installed inside and outside Japan, but I have not heard of any 10 peta class installations. With the current situation in mind, we’d like to hear your views on advancements in technology. When do you believe researchers will be able to freely use 10 peta class supercomputers? How will supercomputers that exceed the “K computer” be developed, and what are the challenges?

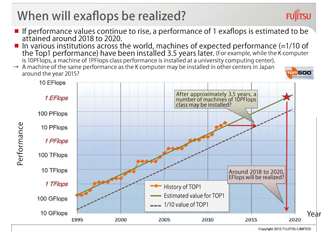

Okuda Looking at the history of the world’s supercomputing performance competition (first place in the TOP500), the performance of supercomputers has risen approximately 1,000 times every 10 years. At this rate, it is predicted that a 1 EFLOPS (exaflops) machine will be developed around 2018 to 2020. This is a target also held by Japan. On the other hand, for supercomputers to be readily available to researchers, they need to be installed all over the world, and their performance may be about 1/10 of that of the top supercomputer. As an actual example, at the same time the “K computer” was completed, a new supercomputer system (Oakleaf-FX) with a peak performance of 1.13 PFLOPS, approximately 1/10 that of the “K computer”, was installed in the Information Technology Center of the University of Tokyo. Since performance improves 10 times in about 3.3 years, supercomputers matching today’s leading TOP500 performance can be expected to be deployed at various institutions throughout the world in approximately 3.3 years. Therefore, you may foresee that supercomputers with a calculation speed equivalent to the “K computer” will be available to universities and research institutions around the year 2015.
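The extrapolation in this answer can be checked with a few lines of arithmetic. The following is a minimal sketch and not part of the interview: the function name `years_to_scale` and the 2012 base year are assumptions introduced for illustration, and the only input taken from the text is the roughly 1,000-fold growth per decade.

```python
import math

# Growth rate quoted in the interview: about 1,000x every 10 years.
GROWTH_PER_DECADE = 1000.0

# Assumption for illustration only: treat 2012 as the year in which a
# 10 PFLOPS machine represents the top of the list.
BASE_YEAR = 2012


def years_to_scale(factor: float, growth_per_decade: float = GROWTH_PER_DECADE) -> float:
    """Years needed for performance to grow by `factor` under steady exponential growth."""
    return 10.0 * math.log(factor) / math.log(growth_per_decade)


if __name__ == "__main__":
    tenfold = years_to_scale(10)       # top-class performance reaching 1/10-scale sites
    hundredfold = years_to_scale(100)  # 10 PFLOPS growing to 1 EFLOPS at the top
    print(f"10x takes about {tenfold:.1f} years, i.e. around {BASE_YEAR + tenfold:.0f}")
    print(f"100x takes about {hundredfold:.1f} years, i.e. around {BASE_YEAR + hundredfold:.0f}")
```

Under these assumptions a tenfold gain takes about 3.3 years and a hundredfold gain about 6.7 years, which is consistent with the 2015 and 2018 to 2020 estimates given above.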

━ If 10 peta class supercomputers become available in the year 2015, does that conversely mean a supercomputer of 100 peta class would be developed by then?

Okuda All vendors have different views and approaches. We at Fujitsu are planning future product development in line with Japan’s national project, drawing on our experience in developing the “K computer”. We will also provide operational assistance and application optimization support for the “K computer”, which will soon begin official operation. Another mission is to maintain an environment that allows software assets to keep advancing while applications for the “K computer” are developed, tuned, and optimized by researchers. Just as we provided the commercial machine “FX10”, we are developing a model to be commercialized around 2014 to 2015 called the “100PFLOPS Level Trans-Exa System”, where “Trans-Exa” means a bridge to exascale computing. Applications developed for the “K computer” and “FX10” will run on this machine as is. The architecture will follow the same concept, and the same programming model can be applied. Of course, higher performance will be obtained if the program is recompiled. The CPU in “FX10” has 1.85 times the peak performance of the CPU in the “K computer”, and also has other functions that improve operability. In “Trans-Exa”, CPU performance, network performance, packaging density, and power consumption are all planned to be greatly improved. Although the machine will be able to scale up to 100 peta, we assume the number of full 100 peta scale installations will be limited. Nevertheless, onsite supercomputer environments of the several peta class should be ready for universities and research institutions around the year 2015.

●The road to realizing exascale

━ What are your views on coming trends in supercomputer development?

Okuda This year’s TOP500 shows an extremely large technology spurt. It is marked by the power efficiency (performance per unit of power) of the first place machine, “Sequoia”, of the United States. Until this year, the “K computer” held the leading value among first place (TOP1) machines, but “Sequoia” surpassed that record by a large margin with 2,000 GFLOPS/kW or higher. To state it briefly, a supercomputer with a power efficiency never seen before was born. However, the performance of a core in a TOP1 machine (LINPACK performance) hasn’t changed very much over the last five years (a core is a calculation unit located in the CPU). The “K computer” has a relatively high value of approximately 15 GFLOPS, but Sequoia’s value is lower, at approximately 10 GFLOPS. Currently, the trend is to improve performance by increasing the number of cores rather than by improving the performance of the core itself.
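The per-core figures quoted here can be reproduced by dividing a machine’s LINPACK result by its core count. The sketch below is illustrative only: the Rmax and core-count values are taken from the published TOP500 lists (November 2011 for the “K computer”, June 2012 for “Sequoia”), not from the interview, and the helper name `gflops_per_core` is hypothetical.

```python
# Figures from the published TOP500 lists (not stated in the interview):
# LINPACK Rmax in TFLOPS and total core count.
MACHINES = {
    "K computer": {"rmax_tflops": 10510.0, "cores": 705_024},
    "Sequoia": {"rmax_tflops": 16324.75, "cores": 1_572_864},
}


def gflops_per_core(rmax_tflops: float, cores: int) -> float:
    """LINPACK performance per core in GFLOPS (1 TFLOPS = 1,000 GFLOPS)."""
    return rmax_tflops * 1000.0 / cores


if __name__ == "__main__":
    for name, m in MACHINES.items():
        per_core = gflops_per_core(m["rmax_tflops"], m["cores"])
        print(f"{name}: {per_core:.1f} GFLOPS per core")
```

With these inputs, “Sequoia” reaches its higher total with more than twice as many cores running at a lower per-core rate, which is the trend described in the answer.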

━ So I understand that lowering power consumption and increasing the number of cores are two trends in technology. In other words, could you say that they also are the challenges faced in the coming exascale development?

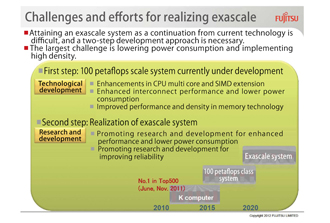

Okuda I agree. We are planning to realize exascale in two steps: “Trans-Exa”, which I just explained, is the first step of technological development, and the second step will consist of further research and development. The first step, “Trans-Exa”, which is currently being developed, raises the performance of each core and also implements many-core processing. The CPU of the “K computer” has 8 cores and that of “FX10” has 16 cores, and the next machine is planned to have even more. Along with improvements in CPU performance, we also plan to improve interconnect performance. We are also planning to lower power consumption, and this is our biggest challenge. Nevertheless, development is moving forward with the goal of surpassing “Sequoia”. To attain higher performance, we also plan to improve packaging density, that is, the number of CPUs packed into a given space. We define these improvements as the first step toward exascale. During this period, we believe there will be a big technology spurt. As predicted earlier, exascale is assumed to be achieved around 2018 to 2020, which is three to five years after the first step. To be honest, it is hard to predict changes in technology five years ahead. We can anticipate the trend of improvements in CPU calculation performance, but how far power consumption can be lowered is unclear. The technologies required for the 100 PFLOPS class machine under development have mostly been identified, but improving current technology alone will not be sufficient to deliver 10 times the performance, so we are awaiting the birth of new technologies. In addition, research and development to improve reliability will also be necessary for realizing exascale.

Regardless of the form exascale systems take, parallel processing will need to be improved in application development. It may be necessary to change the programming model. We have not yet untangled the full picture of exascale. Therefore, we believe the 100 PFLOPS scale system will be a platform that prepares us to advance to future exascale. Along with progress in multi-core technology and research and development into how to make use of machines with SIMD capability, we hope to advance towards the future.

Figure captions: “When will exaflops be realized?” and “Challenges and efforts for realizing exascale”

●To develop a supercomputer that leads the world

━ So that means the machine that will become “Trans-Exa” will be regarded as a test model for future exascale machines by engineers and researchers.

Okuda In the case of the “K computer”, application development projects were initiated along with the commencement of the development project. The HPCI Strategic Project started in 2011. What should be done with exascale should become clear once preparation for the next step begins on a 100 PFLOPS scale machine in the latter half of the HPCI project.

━ Yes, we’re no longer in the times when calculation speed would be enhanced solely by improvements in hardware.

Okuda As the phrase “Co-Design” suggests, this is the age for engineers and researchers to work together to design and develop machines and applications. As with the grand challenge application development for the “K computer”, a preparation period is necessary; otherwise, we will lack applications that make use of the machine’s performance when the exascale machine is completed. In the case of the “K computer”, preparation started four to five years in advance in various fields, and we have now reached a stage where we can start seeing the fruit of those efforts. If positive results are available in 2013 and 2014, they will encourage progress in future research and development, and conversely, the development of another machine for moving on to the next step alongside the “K computer” will be very important. We are developing a 100 PFLOPS scale machine with this vision in mind.

━ “FX1” was released just before the “K computer”, and since their architectures were similar, several research institutions and universities installed the machine early on. From your explanation, you want “Trans-Exa” to be used in the same way, as proper preparation for exascale, correct?

Okuda Yes, even if a machine with high performance is available, you will not be able to produce performance values without that level of preparation.

━ What is the largest challenge in the second step in realizing exascale?

Okuda I feel that lowering power consumption will be the largest concern. Semiconductor technologies have advanced, and it is possible to increase the number of arithmetic circuits in a chip to improve CPU performance. However, if the arithmetic circuits in such a high-performance CPU are used for all calculations, an enormous amount of power will be consumed, and the machine cannot be operated efficiently. Therefore, the challenge is to lower power consumption. It is difficult to develop a circuit that can calculate without using much power, but we must overcome these obstacles. Our desire and mission is to attain exascale through various technological developments, despite what remains unclear and unknown.

━ As a developer, you cannot keep yourself from trying to aim higher.

Okuda Yes, I believe we must continue to do so. We’ve heard from researchers that they cannot go back to their old environment once they have started using the “K computer”. When the development first started, there were questions such as “Is 10 peta really necessary?” and “Are there applications that can be used?” After five years, I believe the situation has totally changed. Furthermore, Japanese researchers need some “strength” to fall back on in order to engage as equals with researchers from across the world. People all over the world are interested in the “K computer” and are awaiting research achievements made with it. Therefore, we must continue to develop machines that can be praised on a global level.

BioSupercomputing Newsletter Vol.7
